AI Services

AI SDLC Assessment Services

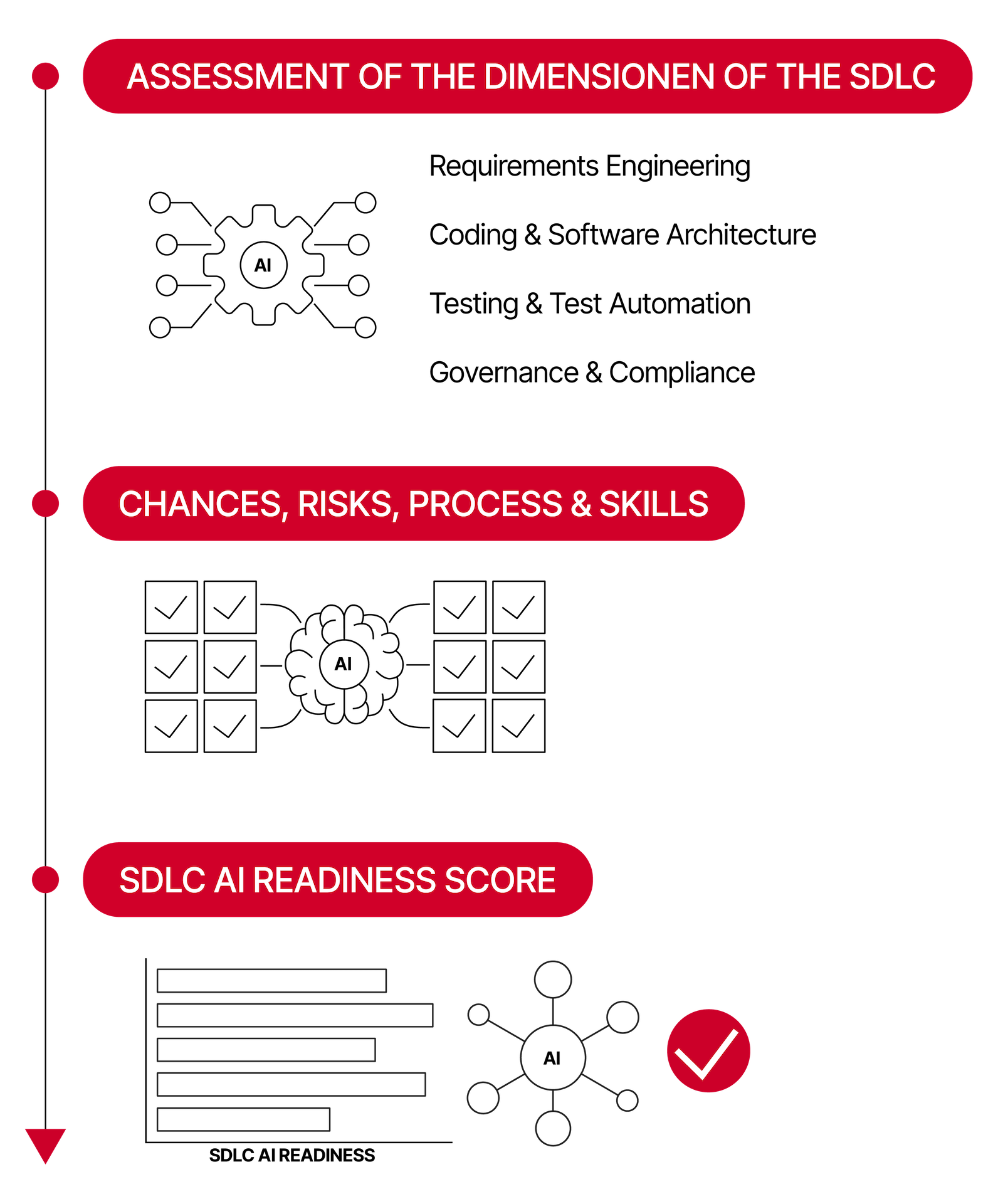

AI is fundamentally transforming the software engineering lifecycle. Tools like GitHub Copilot, Cursor, Claude Code, and ChatGPT are now embedded in developer workflows. The key question for engineering leaders is no longer whether AI is being used, but where it actually improves productivity and quality and where it introduces hidden risks: technical debt, architecture gaps, reduced test effectiveness, and compliance blind spots.

We are your trusted partner to assess where AI helps your software engineering lifecycle — and where it hurts.

OUR AI SDLC SERVICES

02

AI Opportunity & Risk Mapping

AI in Requirements Engineering: Assessment of opportunities such as automated elicitation support, consistency checking, and traceability matrix generation versus risks such as hallucinated requirements, loss of domain context, and traceability gaps.

AI for Software Architecture & Coding: Analysis of architectural decision support, AI-assisted code review, and technical debt detection versus risks such as superficial architecture suggestions, blind spots in non-functional requirements, and unmaintainable AI-generated code.

AI in Testing & Test Automation: Examination of AI-generated test cases, coverage optimization, and self-healing automation versus risks such as false coverage confidence, circular quality (AI testing AI code), reduced human judgement, and test case bloat.

Beyond Pilots - Scaling AI to Production: Assessment of pilot evaluation frameworks, governance scaling, and AI-specific KPIs versus risks such as pilot success that does not translate to production, governance gaps at scale, and unmeasured technical debt accumulation.

03

Governance Framework & Quality Management Plan

AI Usage Recommendations: Usage boundaries per SDLC phase, review requirements for AI tools and generated artifacts, and human-in-the-loop processes.

Quality Metrics Framework: Establishment of metrics to measure AI impact over time, including code quality delta, test coverage changes, review time, bug escape rate, and technical debt indicators.

Compliance Checklist: Mapping of your AI usage to EU AI Act risk classification, CRA, NIS2, GDPR, and applicable industry standards.

Risk Escalation & Monitoring: Definition of escalation procedures for quality issues arising from AI-generated artifacts, together with KPIs and a review cadence to track AI impact on software quality over time.

Executive Presentation: Board-ready summary of findings, roadmap, and governance recommendations for management decision-making.
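
As an illustration of the Quality Metrics Framework above, the following is a minimal sketch of how a baseline could be compared with a later measurement. It is not a description of our actual tooling: the `QualitySnapshot` type, its field names, and the definition of bug escape rate used here (production defects divided by all known defects) are assumptions made for this example, and the numbers are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualitySnapshot:
    """Hypothetical set of quality metrics for one reporting period."""

    test_coverage_pct: float          # test coverage in percent
    defects_found_pre_release: int    # defects caught before release
    defects_found_in_production: int  # defects that escaped to production
    median_review_hours: float        # median time a change spends in review

    @property
    def bug_escape_rate(self) -> float:
        """Share of all known defects that were found only in production."""
        total = self.defects_found_pre_release + self.defects_found_in_production
        return self.defects_found_in_production / total if total else 0.0


def metric_deltas(baseline: QualitySnapshot, current: QualitySnapshot) -> dict[str, float]:
    """Return current minus baseline for each tracked metric."""
    return {
        "test_coverage_pct": current.test_coverage_pct - baseline.test_coverage_pct,
        "bug_escape_rate": current.bug_escape_rate - baseline.bug_escape_rate,
        "median_review_hours": current.median_review_hours - baseline.median_review_hours,
    }


if __name__ == "__main__":
    # Invented example values: pre-AI baseline vs. a later period.
    baseline = QualitySnapshot(70.0, 90, 10, 6.0)
    current = QualitySnapshot(78.0, 80, 20, 4.0)
    for name, delta in metric_deltas(baseline, current).items():
        print(f"{name}: {delta:+.2f}")
```

In this invented case, coverage rises and review time falls while the bug escape rate also rises, which is the kind of mixed signal a metrics framework is meant to surface rather than hide.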

04

Ongoing Advisory & Follow-Up Services

Quarterly Retained Advisory: Regular AI quality checks, updated recommendations, and compliance reviews as tools and regulations evolve.

Implementation Support: Execution of roadmap recommendations through existing consulting and implementation services, including requirements engineering, architecture, testing, test automation, and more.

Workshops & Coaching: AI-focused sessions such as Prompt Engineering for Testers, AI-Aware Requirements Engineering, and AI Governance for Architects.

Compliance Audits & Contract Advisory: Recurring assessments as EU AI Act and NIS2 requirements evolve, as well as support in selecting and adopting AI products and services.

01

Inventory & Software Quality Baseline

AI Tool Landscape Analysis: Identification of AI tools in use across your teams, governed usage vs. shadow AI, and existing policies.

SDLC Process Mapping: Capture of current processes in requirements engineering, software architecture, development, testing, and deployment with identification of handoffs, bottlenecks, and quality gates.

Software Quality Baseline: Establishment of the pre-AI baseline of existing quality gates, test coverage levels, defect rates, and review processes to measure future AI impact.

Team Maturity Assessment: Evaluation of prompt engineering competencies, AI literacy, developer team sentiment, and existing guidelines or training.

Compliance Scan: Review of GDPR and CRA readiness, EU AI Act implications, IP protection policies, data residency requirements, NIS2 exposure, and relevant ISO standards.

Benefits

Get your expert consultation today

Request an expert on-demand consultation to boost your development processes.

Talk to an Expert

20+ years of Software Quality experience